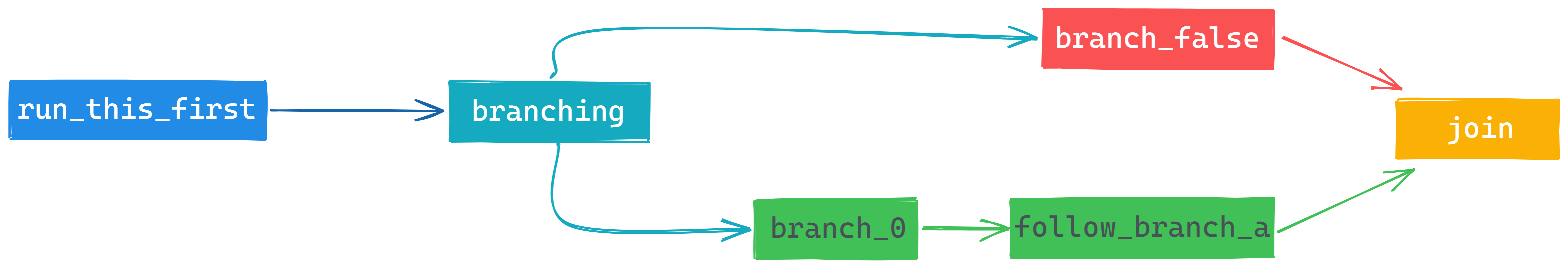

Airflow was designed to make data integration between systems easy. Its ability to meet the needs of simple and complex use cases alike makes it both easy to adopt and easy to scale. Today it supports more than 70 providers, including AWS, GCP, Microsoft Azure, Salesforce, Slack, and Snowflake.

Airflow 2.0 is out. To find out how it works, what the new features are, and what you can do with your DAGs, you need to run Airflow 2.0.

The TriggerDagRunOperator takes the following parameters:

- trigger_dag_id (str) - The dag_id to trigger (templated).
- conf (dict) - Configuration for the DAG run.
- execution_date (str or datetime.datetime) - Execution date for the DAG (templated).
- reset_dag_run (bool) - Whether or not to clear the existing DAG run if it already exists. This is useful when backfilling or rerunning an existing DAG run. When reset_dag_run=False and the DAG run exists, DagRunAlreadyExists is raised. When reset_dag_run=True and the DAG run exists, the existing DAG run is cleared and rerun.
- wait_for_completion (bool) - Whether or not to wait for DAG run completion.
- poke_interval (int) - Poke interval used to check the DAG run status when wait_for_completion=True.

The SQL sensor (SqlSensor) runs a SQL statement repeatedly until a criterion is met. It keeps trying until the success or failure criteria are met, or until the first cell returned is not in (0, '0', '', None). The optional success and failure callables are called with the first cell returned as the argument.
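
The SQL sensor behaviour described above can be illustrated without Airflow at all. The following is a minimal sketch, not Airflow's implementation: it uses the standard library's sqlite3 module as a stand-in database, and the names `poke`, `wait_until`, and `max_pokes` are hypothetical helpers introduced only for this illustration.

```python
import sqlite3
import time
from typing import Any, Callable, Optional

Criterion = Optional[Callable[[Any], bool]]


def poke(conn: sqlite3.Connection, sql: str,
         success: Criterion = None, failure: Criterion = None) -> bool:
    """Run `sql` once and report whether the sensor condition is met."""
    row = conn.execute(sql).fetchone()
    if row is None:
        # No rows at all: treat the condition as not met yet.
        return False
    first_cell = row[0]
    # The failure callable, if given, is checked first and aborts the wait.
    if failure is not None and failure(first_cell):
        raise RuntimeError(f"failure criterion met for value {first_cell!r}")
    # The success callable, if given, decides the outcome.
    if success is not None:
        return bool(success(first_cell))
    # Default rule from the text: met when the first cell is not "empty".
    return first_cell not in (0, "0", "", None)


def wait_until(conn: sqlite3.Connection, sql: str, *,
               success: Criterion = None, failure: Criterion = None,
               poke_interval: float = 0.0, max_pokes: int = 10) -> int:
    """Poke repeatedly; return the number of pokes it took to succeed."""
    for attempt in range(1, max_pokes + 1):
        if poke(conn, sql, success=success, failure=failure):
            return attempt
        time.sleep(poke_interval)
    raise TimeoutError(f"condition not met after {max_pokes} pokes")


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE events (id INTEGER)")
    print(poke(conn, "SELECT COUNT(*) FROM events"))  # table is empty
    conn.execute("INSERT INTO events VALUES (1)")
    print(poke(conn, "SELECT COUNT(*) FROM events"))  # one row present
```

A real sensor would be bounded by a timeout rather than a fixed number of pokes; `max_pokes` is used here only to keep the sketch short and easy to test.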

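The reset_dag_run and wait_for_completion semantics described above can be modelled in a few lines of plain Python. This is a toy model under stated assumptions, not Airflow code: the in-memory `runs` dictionary, the helper names `trigger_run` and `wait_for_run`, the state strings, and the default values for allowed_states and failed_states are all hypothetical and chosen only for illustration.

```python
import time
from typing import Callable, Dict, Iterable, Tuple

RunKey = Tuple[str, str]  # (dag_id, execution_date)


class DagRunAlreadyExists(Exception):
    """Stand-in for the Airflow exception of the same name."""


def trigger_run(runs: Dict[RunKey, str], dag_id: str, execution_date: str,
                reset_dag_run: bool = False) -> str:
    """Create a run, or clear an existing one when reset_dag_run=True."""
    key = (dag_id, execution_date)
    if key in runs:
        if not reset_dag_run:
            # Existing run and no reset requested: refuse to trigger.
            raise DagRunAlreadyExists(f"{dag_id} @ {execution_date}")
        # "Clearing" is modelled as putting the run back in the queue.
        runs[key] = "queued"
        return "cleared"
    runs[key] = "queued"
    return "created"


def wait_for_run(get_state: Callable[[], str], poke_interval: float = 60,
                 allowed_states: Iterable[str] = ("success",),
                 failed_states: Iterable[str] = ("failed",)) -> str:
    """Poll the run state every poke_interval seconds until it settles."""
    allowed, failed = set(allowed_states), set(failed_states)
    while True:
        state = get_state()
        if state in failed:
            raise RuntimeError(f"run ended in failed state {state!r}")
        if state in allowed:
            return state
        time.sleep(poke_interval)


if __name__ == "__main__":
    runs: Dict[RunKey, str] = {}
    print(trigger_run(runs, "target_dag", "2021-01-01"))
    print(trigger_run(runs, "target_dag", "2021-01-01", reset_dag_run=True))
```

Calling `trigger_run` a second time for the same dag_id and execution date without reset_dag_run=True raises DagRunAlreadyExists, which mirrors the behaviour the parameter description gives for the real operator.
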
Apache Airflow is already a commonly used tool for scheduling data pipelines.

TriggerDagRunOperator triggers a DAG run for a specified dag_id. Its signature is `TriggerDagRunOperator(*, trigger_dag_id: str, conf: Optional[Dict] = None, execution_date: Optional[Union[str, datetime.datetime]] = None, reset_dag_run: bool = False, wait_for_completion: bool = False, poke_interval: int = 60, allowed_states: Optional[List] = None, failed_states: Optional[List] = None, **kwargs)`. An accompanying link class (name = "Triggered DAG", with a `get_link(self, operator, dttm)` method) allows users to access the DAG triggered by a task using TriggerDagRunOperator.

Now we are ready to install a few essential packages. We will be using Postgres for Airflow's metadata database.

Once Postgres is installed, we need to create a new database and user: `sudo -u postgres bash -c "createdb airflow"` and `sudo -u postgres bash -c "createuser airflow --pwprompt"`. The createuser command will ask you for a password.

The best practice is not to run Airflow as the root user, so let's create a dedicated user with the adduser command: `sudo adduser airflow`. Then switch to the airflow user and cd into its home directory.

Now we are ready to install Airflow via pip3: `AIRFLOW_VERSION=2.0.1`

On Ubuntu 20, pip installs the Airflow executable in /home/ubuntu/.local/bin, and by default this directory is not searched when running commands. Add the .local/bin directory to your $PATH variable: `echo "export PATH=$PATH:$HOME/.local/bin" >> ~/.bashrc`. Airflow should now be set up, and you can test it via the CLI.

Switch back to the root user by typing exit. Create a new Nginx config file named airflow in the /etc/nginx/sites-available directory: `sudo nano /etc/nginx/sites-available/airflow`. Paste the Nginx settings into that file and update the values for server_name with your own server's domain or IP address.
